Case Study: ZofAI

Client: ZofAI — Autonomous reliability infrastructure powered by 40+ specialized AI agents

Industry: AI-powered software testing & reliability

Challenge: Enterprise-grade product, but a website that search engines couldn't properly read

Engagement: Ongoing

The Fundamental Problem

ZofAI had built a genuinely differentiated product: autonomous reliability infrastructure with 40+ specialized AI testing agents that validate changes across 19 reliability dimensions before production. Enterprise-grade technology. SOC 2 Type II, GDPR, and HIPAA compliant.

But their website was invisible to search engines — and not because of content quality. The technical foundation was broken.

What our audit uncovered

When we ran Wrodium's technical GEO audit on zof.ai, we found something we don't see often: a company preparing for an enterprise-ready site transition with almost none of the infrastructure in place to be found.

- Multiple fragmented sitemaps with no topical clustering: ZofAI had several XML sitemaps, but they were disjointed and inconsistent. Within each one, pages were dumped into a flat list with no logical grouping, so products sat next to blog posts and legal pages. There were no topical clusters for crawlers to follow.

- Sitemap pointing at localhost: The most critical issue. Their primary sitemap contained URLs pointing to localhost:3000 instead of https://zof.ai. Search engines were being told to index development URLs that don't exist on the public internet.

- No robots.txt: ZofAI had no robots.txt file at all. Without it, crawlers had no guidance on what to crawl, how often to crawl, or where the sitemap was located.

- No llms.txt: No structured file for LLMs. ChatGPT, Perplexity, Claude, and Gemini had no curated guide to the site's content or product hierarchy, which left ZofAI hard to surface in AI-powered search and discovery.

- Weak metadata across pages: Titles and descriptions were generic, didn't target specific search intents, and pages weren't built to reference each other.

- Pages existed in isolation: Product pages, solution pages, and docs were siloed with no interlinking strategy. No page built authority for the next.

- No cache optimization: Pages weren't leveraging browser caching or CDN edge caching. Every visit was a full server round-trip, resulting in slow load times and poor Core Web Vitals, both of which hurt crawl efficiency and ranking potential.

The localhost sitemap alone meant that every time Google or Bing crawled ZofAI's sitemap, they were hitting dead URLs. Months of potential indexing — wasted.

What the broken sitemap looked like

    <!-- BEFORE: ZofAI's sitemap was pointing at localhost -->
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>http://localhost:3000/</loc>
        <lastmod>2025-11-15</lastmod>
      </url>
      <url>
        <loc>http://localhost:3000/product</loc>
        <lastmod>2025-11-15</lastmod>
      </url>
      <!-- Every URL was a dead link to a dev server -->
    </urlset>
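
A check like the following could catch this class of mistake before a sitemap ships. It is a minimal sketch, not the audit tooling used in the engagement: the function name find_bad_locs is hypothetical, and the third URL in the sample is added for illustration only.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def find_bad_locs(sitemap_xml: str, expected_host: str = "zof.ai") -> list[str]:
    """Return every <loc> that is not an https URL on the expected host."""
    root = ET.fromstring(sitemap_xml)
    bad = []
    for loc in root.findall(".//sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parsed = urlparse(url)
        if parsed.scheme != "https" or parsed.hostname != expected_host:
            bad.append(url)
    return bad


# Sample modeled on the broken sitemap above; the /pricing entry is a
# hypothetical "good" URL added so the check has something to pass.
BROKEN = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>http://localhost:3000/</loc><lastmod>2025-11-15</lastmod></url>
  <url><loc>http://localhost:3000/product</loc><lastmod>2025-11-15</lastmod></url>
  <url><loc>https://zof.ai/pricing</loc></url>
</urlset>"""

for url in find_bad_locs(BROKEN):
    print("non-production URL in sitemap:", url)
```

Run as a build or CI step, a non-empty result would be a reason to block the deploy.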

What Wrodium Implemented

We treated ZofAI's engagement as a full enterprise site architecture buildout — not just content optimization. The product was ready for enterprise buyers; the website needed to match.

1. Technical SEO: Sitemap Division into Topical Clusters

Rather than just fixing the broken sitemap, we took the opportunity to do it right. We replaced ZofAI's flat, fragmented sitemaps with a sitemap index that divides URLs into topical clusters — giving search engine crawlers a clear map of the site's content architecture.

Every URL was corrected from localhost:3000 to the production domain https://zof.ai, and then organized by topic.

How we divided the sitemap

- Replaced all localhost:3000 references with https://zof.ai

- Created a sitemap index (sitemap.xml) that references 3 topical cluster sitemaps:

  - Products & Platform cluster: Platform overview, System Graph, Agent Framework, Workflow Engine, AI Test Generation, Reliability Scoring, Integrations, Security, Pricing

  - Solutions & Use Cases cluster: CTO, VP Engineering, QA Leaders, SRE Teams, Financial Services, Healthcare, E-commerce

  - Content & Resources cluster: Blog posts, Documentation, Quickstart, API Reference, Guides, Videos

- Added proper <lastmod>, <changefreq>, and <priority> values per cluster

- Created an HTML sitemap (zof.ai/sitemap) for human navigation

Why clusters matter: A sitemap divided into topical clusters shows crawlers how the content is grouped before they visit a single page. The Products cluster tells crawlers "these pages are related," which reinforces topical authority. A flat sitemap with 50+ unrelated URLs gives crawlers no context. Clusters give them a thesis.

Within days of deploying the clustered sitemap, Google Search Console showed a dramatic increase in indexed pages. URLs that had been ignored for months were now being crawled, understood in context, and indexed properly.
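
The sitemap index described in this section can be sketched as follows. This is an illustration under stated assumptions rather than the files that were deployed: the page paths, the sitemap-NAME.xml file names, and the lastmod date are all hypothetical, since the case study names the pages but not their URLs.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
BASE = "https://zof.ai"

# Hypothetical paths, two per cluster, standing in for the real page lists.
CLUSTERS = {
    "products": ["/platform", "/pricing"],
    "solutions": ["/solutions/cto", "/solutions/sre"],
    "content": ["/blog", "/docs/quickstart"],
}


def build_urlset(paths, lastmod):
    """Build one cluster sitemap: a <urlset> of production URLs."""
    urlset = ET.Element("urlset", xmlns=NS)
    for path in paths:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = BASE + path
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


def build_index(cluster_names, lastmod):
    """Build the sitemap index that points at each cluster sitemap."""
    index = ET.Element("sitemapindex", xmlns=NS)
    for name in cluster_names:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{BASE}/sitemap-{name}.xml"
        ET.SubElement(entry, "lastmod").text = lastmod
    return ET.tostring(index, encoding="unicode")


print(build_index(CLUSTERS, "2025-12-01"))
for name, paths in CLUSTERS.items():
    print(name, build_urlset(paths, "2025-12-01"))
```

Generating every URL from a single BASE constant is the design choice that matters here: a development host cannot leak into one cluster file while the others stay correct.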

2. robots.txt — From Zero to Enterprise-Grade

ZofAI had no robots.txt before working with us. That means crawlers had no instructions — no sitemap pointer, no crawl directives, no guidance whatsoever.

What we created

- A production robots.txt with proper User-agent directives

- Sitemap declaration so every crawler finds the canonical sitemap automatically

- Selective disallow rules for internal/admin paths that shouldn't be crawled

- Crawl-delay considerations for responsible bot behavior
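
A file along these lines can be sanity-checked with Python's standard-library parser before it goes live. This is only a sketch: the disallowed paths and the delay value are hypothetical, because the case study does not list the actual rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: the real disallowed paths and delay are not given
# in the case study.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /internal/
Crawl-delay: 5

Sitemap: https://zof.ai/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Public pages stay crawlable, internal paths do not.
print(parser.can_fetch("*", "https://zof.ai/product"))
print(parser.can_fetch("*", "https://zof.ai/admin/users"))

# The sitemap declaration and crawl delay are picked up as well.
print(parser.site_maps())
print(parser.crawl_delay("*"))
```

Crawl-delay is not honored by every crawler (Googlebot ignores it), so it is best treated as a courtesy hint rather than a guarantee.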

3. llms.txt — Improving AI Navigability (Including ChatGPT Search)

As ZofAI was preparing for an enterprise-ready site transition, we recommended something most companies haven't implemented yet: an llms.txt file — and then we focused on making it genuinely useful for AI navigation, not just a flat list of links.

llms.txt is a proposed standard that aims to help large language models, including ChatGPT, Claude, Perplexity, and Gemini, understand a website's structure, navigate its content, and cite it accurately. Think of it as the LLM counterpart to robots.txt and the sitemap: a single well-known file, written in Markdown, that points models at the content that matters.

Why ChatGPT matters here

ChatGPT has become a primary discovery channel for enterprise buyers. When a CTO asks ChatGPT "What are the best AI testing platforms?" or "autonomous reliability tools for enterprise," ChatGPT browses the web, reads site content, and decides what to cite. Without an llms.txt, a model has to work through pages one by one and infer the site's structure. A well-structured llms.txt is meant to let any model that reads it grasp the full product offering, who it's for, and which page to cite before visiting a single URL.

The same applies to ChatGPT search (first previewed as SearchGPT), which browses and synthesizes web content in real time. A navigable llms.txt improves the odds of being cited accurately rather than skipped.

How we improved navigability

Most llms.txt files are just dumped link lists. We structured ZofAI's llms.txt as a hierarchical navigation layer that mirrors how LLMs like ChatGPT actually process information — top-down, from identity to capabilities to evidence:

- Identity block first — Company name, one-line description, and key differentiator at the very top so ChatGPT and other LLMs immediately understand what ZofAI is before seeing any links

- Key Facts section — 40+ agents, 19 reliability dimensions, SOC 2 / GDPR / HIPAA compliance — quantified claims that LLMs can extract and cite directly in responses

- Products directory (grouped) — System Graph, Agent Framework, Workflow Engine, AI Test Generation, Reliability Scoring, Integrations, Security, Pricing — each with a one-line descriptor so ChatGPT knows what each link contains before visiting it

- Solutions by persona — For CTOs, VPs of Engineering, QA Leaders, SRE Teams — grouped so when ChatGPT detects a user is a CTO asking about reliability, it can go directly to the CTO page

- Solutions by industry — Financial Services, Healthcare, E-commerce — enabling ChatGPT and other LLMs to serve industry-specific answers

- Documentation — Quickstart, API Reference, CI/CD Integration — providing technical validation depth that LLMs use to assess credibility

- Company, Legal & Resources — About, Careers, Terms, Privacy, DPA, Security Practices, Blog, RSS Feed

- Full directory link: Points to llms-full.txt for complete page-level detail when ChatGPT or other LLMs need deeper context

The navigability difference: When someone asks ChatGPT "What is ZofAI?" or "best AI testing platforms for enterprise," the llms.txt doesn't just give ChatGPT a list of URLs — it gives it a structured roadmap with context at every level. ChatGPT can understand ZofAI's entire value proposition — products, personas, industries, compliance — without needing to crawl every page. The same applies to Claude, Perplexity, and Gemini. Better navigability means more accurate citations, more relevant recommendations, and higher placement in AI-generated answers across every LLM.
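
The top-down ordering described above (identity, key facts, grouped links, full directory) can be sketched as a small renderer. It is an illustration, not ZofAI's actual file: the URLs and one-line descriptors are hypothetical placeholders, while the name, summary, and key facts are taken from this case study.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str


def render_llms_txt(name, summary, facts, sections):
    """Render an llms.txt body: H1 identity, summary, key facts, then link groups."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    lines += ["Key facts:", ""] + [f"- {fact}" for fact in facts] + [""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        lines += [f"- [{link.title}]({link.url}): {link.note}" for link in links]
        lines.append("")
    return "\n".join(lines)


# URLs and notes below are hypothetical placeholders.
SECTIONS = {
    "Products": [
        Link("System Graph", "https://zof.ai/product/system-graph", "placeholder descriptor"),
        Link("Agent Framework", "https://zof.ai/product/agent-framework", "placeholder descriptor"),
    ],
    "Solutions by persona": [
        Link("For CTOs", "https://zof.ai/solutions/cto", "placeholder descriptor"),
    ],
    "Full directory": [
        Link("llms-full.txt", "https://zof.ai/llms-full.txt", "complete page-level detail"),
    ],
}

text = render_llms_txt(
    "ZofAI",
    "Autonomous reliability infrastructure powered by 40+ specialized AI agents",
    ["40+ specialized AI testing agents", "19 reliability dimensions",
     "SOC 2 Type II, GDPR, and HIPAA compliant"],
    SECTIONS,
)
print(text)
```

Keeping the content in structured data and rendering the file from it makes it easier to keep llms.txt in step with the sitemap clusters as pages are added.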

4. Metadata Optimization for Search Intents

ZofAI's page titles and meta descriptions were generic. A product page might say "ZofAI — Product" without specifying what the product does or who it's for. This meant they were competing for nothing — no specific search intent was being targeted.

What we changed

We rewrote metadata across every page to target the specific search intents that enterprise buyers actually use. The two generic titles below show the old pattern; the three that follow show the rewritten style:

"ZofAI — Solutions"

"ZofAI — Documentation"

"AI Testing for CTOs — Executive Reliability Strategy | ZofAI"

"AI Test Generation — Automatic Test Case Generation | ZofAI"

"SRE-Grade Reliability Validation for Enterprise Systems | ZofAI"

Each title now targets a specific search intent — "AI testing platform," "autonomous reliability," "AI test generation," "SRE reliability validation" — terms that enterprise engineering leaders actually type into Google, ChatGPT, and Perplexity.
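
A simple lint can flag titles that carry a brand and a section name but no search intent. The sketch below is one possible heuristic, not the method used in the engagement: the function name and the three-word threshold are assumptions chosen for illustration.

```python
import re

# Titles are split on " | " or on the long dash (written as \u2014) used in
# the titles quoted above.
SEPARATORS = re.compile(r"\s+[\u2014|]\s+")


def is_generic_title(title: str, brand: str = "ZofAI", min_words: int = 3) -> bool:
    """Flag a title whose non-brand parts contain fewer than min_words words."""
    parts = [part.strip() for part in SEPARATORS.split(title)]
    descriptive = [p for p in parts if p and p.lower() != brand.lower()]
    word_count = sum(len(p.split()) for p in descriptive)
    return word_count < min_words


TITLES = [
    "ZofAI \u2014 Solutions",
    "ZofAI \u2014 Documentation",
    "AI Testing for CTOs \u2014 Executive Reliability Strategy | ZofAI",
    "AI Test Generation \u2014 Automatic Test Case Generation | ZofAI",
    "SRE-Grade Reliability Validation for Enterprise Systems | ZofAI",
]

for title in TITLES:
    label = "generic" if is_generic_title(title) else "ok"
    print(f"{label:8} {title}")
```

A word count is a crude proxy for intent, so a check like this is best used to surface candidates for a human rewrite rather than to score titles.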

5. Cache Optimization — Speed as a Ranking Signal

ZofAI's site had no caching strategy. Every page visit triggered a full server round-trip — no browser caching, no CDN edge caching, no static asset optimization. For a site with 50+ pages and enterprise buyers evaluating the platform, this meant slow load times, poor Core Web Vitals, and wasted crawl budget.

What we optimized

- Browser cache headers: Set proper Cache-Control and ETag headers for static assets (CSS, JS, images, fonts) so returning visitors load pages near-instantly from local cache

- CDN edge caching: Configured edge caching rules so pages are served from the nearest CDN node rather than the origin server, cutting TTFB (Time to First Byte) dramatically

- Immutable asset fingerprinting: Static assets now include content hashes in filenames, enabling aggressive long-term caching without stale content risk

- HTML cache strategy: Short-lived cache for HTML pages with stale-while-revalidate so content stays fresh while still being served fast

- Image optimization: Compressed and converted images to modern formats with responsive sizing, reducing page weight significantly

Why cache matters for SEO: Google explicitly uses Core Web Vitals as a ranking signal. Faster pages get crawled more efficiently (better crawl budget utilization), rank higher in search results, and provide better user experience — which reduces bounce rates. For an enterprise site like ZofAI with 50+ pages, the cumulative effect of proper caching on crawl efficiency and ranking is substantial.
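
The header strategy listed above can be expressed as a small policy function. This is a sketch under assumptions: the filename pattern for fingerprinted assets and every max-age value are illustrative, not the settings configured for ZofAI.

```python
import re

STATIC_EXT = r"(?:css|js|png|jpg|webp|avif|svg|woff2)"

# Assumed naming scheme for fingerprinted assets, e.g. app.3f9a1c2b.js
FINGERPRINTED = re.compile(rf"\.[0-9a-f]{{8,}}\.{STATIC_EXT}$")
STATIC = re.compile(rf"\.{STATIC_EXT}$")


def cache_control_for(path: str) -> str:
    """Pick a Cache-Control value for a request path (illustrative values)."""
    if FINGERPRINTED.search(path):
        # Content hash in the filename: safe to cache for a year.
        return "public, max-age=31536000, immutable"
    if STATIC.search(path):
        # Static asset without a hash: cache for a day.
        return "public, max-age=86400"
    # HTML: browsers revalidate, the CDN keeps a short-lived copy and may
    # serve it stale while refreshing in the background.
    return "public, max-age=0, s-maxage=300, stale-while-revalidate=600"


for path in ["/assets/app.3f9a1c2b.js", "/logo.png", "/product"]:
    print(path, "->", cache_control_for(path))
```

The split works because a fingerprinted file gets a new URL whenever its content changes, so it never needs revalidating, while HTML keeps a stable URL and has to stay fresh.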

6. Page Architecture — Making Pages Build on Each Other

The biggest structural change we made was transforming ZofAI's pages from isolated islands into a reinforcing content ecosystem. Every page now builds authority for the pages around it.

The architecture we designed

We organized ZofAI's 50+ pages into a layered hierarchy where each level strengthens the next:

- Layer 1: Platform Overview — The main product page establishes what ZofAI is and links down to every product feature

- Layer 2: Product Features — System Graph, Agent Framework, Workflow Engine, AI Test Generation, Reliability Scoring — each page targets a specific capability and links to relevant solutions

- Layer 3: Solutions by Persona — For CTOs, VPs of Engineering, QA Leaders, SRE Teams — each page speaks to a specific buyer's problems and links to the product features that solve them

- Layer 4: Solutions by Industry — Financial Services, Healthcare, E-commerce — each page contextualizes the platform for specific compliance and reliability requirements

- Layer 5: Documentation — Quickstart, API Reference, CI/CD Integration — each doc page validates the product claims made at higher levels

- Layer 6: Blog & Resources — Engineering insights and reliability thought leadership that drives topical authority back up the chain

How this compounds: When an SRE searches for "AI reliability validation for enterprise," the Solutions page ranks because it's reinforced by the Product pages above it, validated by Documentation below it, and supported by thought leadership from the Blog. No page exists alone — every page makes every other page stronger.
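
Whether pages really do reinforce each other can be checked against the internal link graph. The sketch below uses a hand-written, hypothetical graph (the slugs and links are not ZofAI's) to find pages that nothing links to and pages that cannot be reached from the platform overview.

```python
from collections import deque

# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/platform": ["/product/system-graph", "/product/agent-framework"],
    "/product/system-graph": ["/solutions/sre"],
    "/product/agent-framework": ["/solutions/cto"],
    "/solutions/sre": ["/product/system-graph", "/docs/quickstart"],
    "/solutions/cto": ["/product/agent-framework"],
    "/docs/quickstart": ["/platform"],
    "/blog/reliability-notes": ["/platform"],
}


def orphan_pages(links):
    """Pages that no other page links to."""
    linked_to = {target for targets in links.values() for target in targets}
    return sorted(page for page in links if page not in linked_to)


def reachable_from(start, links):
    """Breadth-first search over internal links, starting at one page."""
    seen, queue = {start}, deque([start])
    while queue:
        for target in links.get(queue.popleft(), []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


print("orphans:", orphan_pages(LINKS))
print("unreachable from /platform:",
      sorted(set(LINKS) - reachable_from("/platform", LINKS)))
```

In this sample the blog post links up to the platform page but nothing links back to it, which is exactly the kind of isolated page the layered architecture is meant to eliminate.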

Before and After: The Full Picture

- Before: Multiple fragmented sitemaps pointing at localhost:3000

- After: Sitemap index with 3 topical clusters (Products, Solutions, Content) + human-readable /sitemap page, all URLs production-correct

- Before: No robots.txt; crawlers had zero guidance

- After: Enterprise-grade robots.txt with sitemap declaration and proper directives

- Before: No llms.txt; AI systems couldn't navigate the site

- After: Hierarchical llms.txt with identity block, key facts, grouped products, persona-matched solutions, and full directory link, built for AI navigability

- Before: No caching — every visit was a full server round-trip

- After: Browser cache headers, CDN edge caching, immutable asset fingerprinting, and image optimization — dramatically improved Core Web Vitals and crawl efficiency

- Before: Generic metadata like "ZofAI — Product"

- After: Intent-driven metadata targeting enterprise search queries across every page

- Before: Isolated pages with no interlinking strategy

- After: 6-layer page architecture where every page reinforces every other page's authority

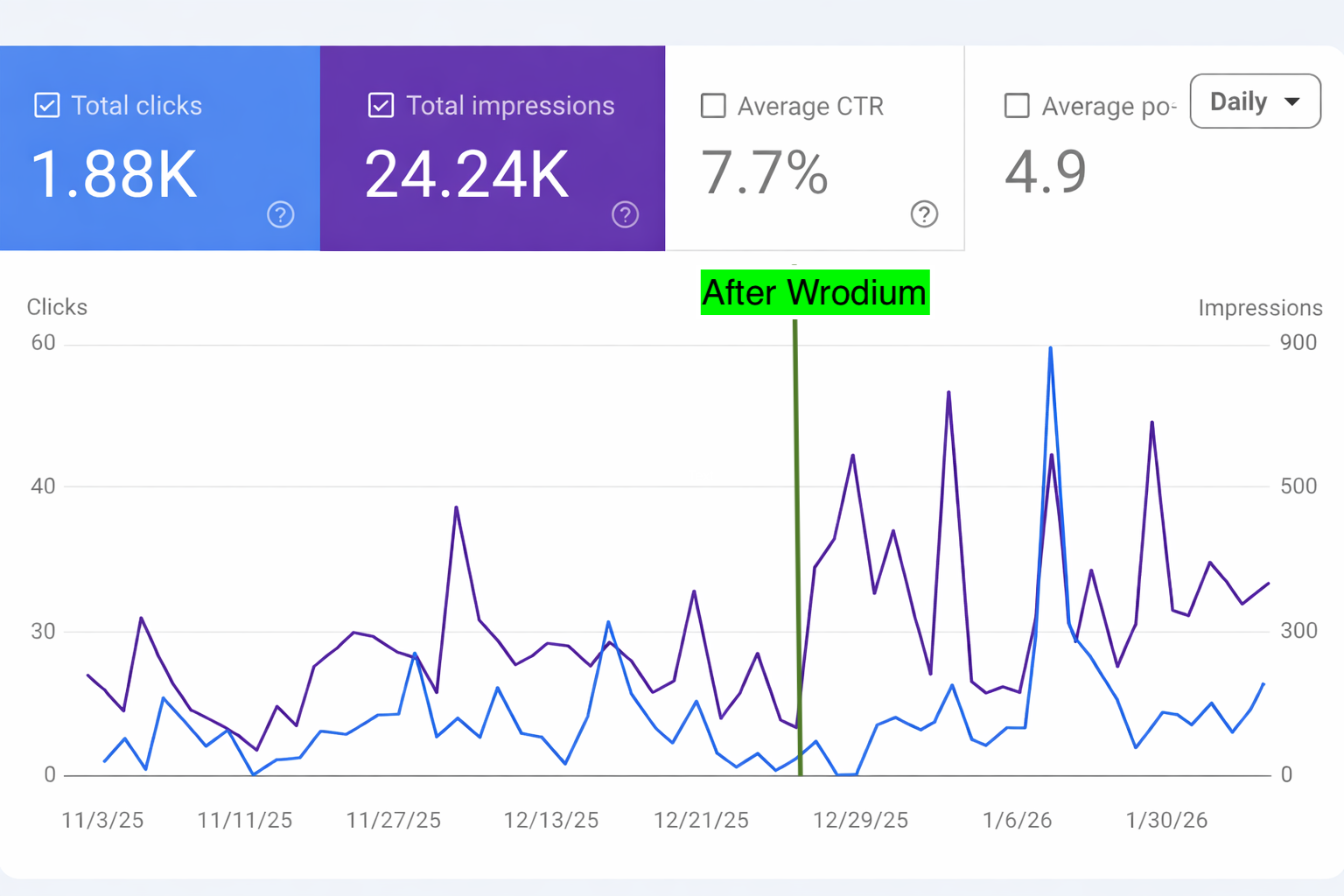

The Results

The combination of fixing the broken technical foundation and building an enterprise-grade content architecture produced compounding results.

Ranking #1 on enterprise search intents

ZofAI now ranks #1 on several high-intent search queries that enterprise engineering leaders use when evaluating reliability and testing infrastructure. These aren't vanity keywords — they're the exact terms CTOs, VPs of Engineering, and SRE leads search before making purchasing decisions.

The rankings are driven by the page architecture we built: each ranking page is reinforced by the layers above and below it. A Solutions page ranks because the Product pages validate its claims, the Documentation proves the technical depth, and the Blog establishes thought leadership.

From invisible to enterprise-ready

Before Wrodium, ZofAI's site was technically invisible. The sitemaps pointed at localhost, there was no robots.txt, no llms.txt, no caching, and no structured metadata. Search engines and AI systems had no way to properly discover, crawl, or understand the site.

After Wrodium, ZofAI has:

- A clustered sitemap index with topical groupings that give crawlers context before they visit a single page

- A robots.txt guiding crawler behavior

- A navigability-first llms.txt that AI systems use to understand and cite the platform accurately

- Optimized caching with browser cache headers, CDN edge caching, and asset fingerprinting for fast load times and better crawl efficiency

- Intent-driven metadata on every page targeting specific enterprise buyer queries

- A reinforcing page architecture where 50+ pages build authority for each other

- #1 rankings on multiple search intents that matter to their enterprise buyers

"Wrodium didn't just fix our technical issues — they built the search architecture we needed for enterprise readiness. They divided our sitemap into topical clusters, improved our llms.txt navigability so AI engines can actually understand us, optimized our caching infrastructure, and rewrote our metadata for real search intents. We went from localhost sitemaps to ranking #1 on the exact queries our buyers use."

Key Takeaways

Technical foundations matter more than content volume

ZofAI already had strong content. The problem was that search engines couldn't reliably find it. A sitemap pointing at localhost gives crawlers nothing they can index, no matter how good your content is.

robots.txt and llms.txt navigability are table stakes for enterprise sites

If you're selling to enterprise buyers, your site needs to be navigable by both search engine crawlers and LLMs. robots.txt guides Google and Bing. llms.txt guides ChatGPT, ChatGPT Search, Claude, Perplexity, and Gemini. But navigability isn't just having the file — it's structuring it so LLMs can quickly find the right content for any query. ChatGPT is now a primary product discovery channel for engineering leaders. If it can't navigate your site, you don't get cited.

Cache optimization is a ranking signal most teams ignore

Fast-loading pages get crawled more efficiently, score better in Core Web Vitals assessments, and convert better. For a 50+ page enterprise site, the cumulative effect of proper browser caching, CDN edge caching, and asset optimization on crawl efficiency and rankings is substantial, and it's one of the easiest technical SEO wins to implement.

Pages that build on each other compound faster than isolated pages

ZofAI's rankings accelerated because their page architecture creates internal reinforcement. Each product page strengthens the solutions pages. Each solutions page validates the platform overview. Documentation proves technical depth. Every page makes every other page rank better.

Metadata should target search intents, not brand names

"ZofAI — Product" doesn't rank for anything. "AI-Powered Autonomous Reliability Infrastructure" ranks for the exact query an engineering leader types into Google. Metadata is the first thing search engines read — it needs to match what buyers are actually searching.

Enterprise readiness starts with search readiness

ZofAI was preparing to transition to an enterprise-ready site. We ensured that the technical and architectural foundation matched the ambition. Enterprise buyers use search to evaluate tools. If they can't find you, your product doesn't exist to them.